Building a Scalable Serverless Payment Processing System with AWS Lambda, SQS, and Step Functions

Introduction

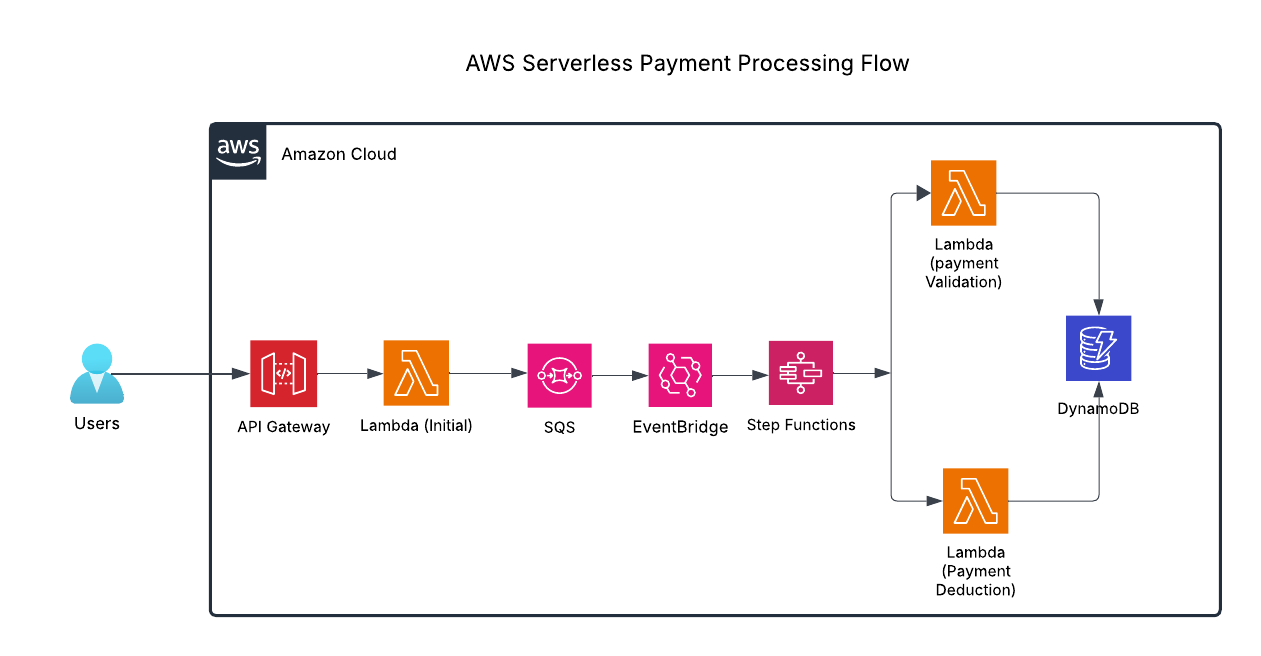

Payment systems must process large volumes of transactions reliably. Serverless computing offers many benefits, but it brings its own challenges, such as managing state, debugging distributed components, and working within execution time limits (a single Lambda invocation can run for at most 15 minutes). To address them, we combine AWS Lambda, SQS, Step Functions, and DynamoDB into a system that is robust and flexible and that processes payments efficiently.

Challenges and Solution

Building serverless applications can be tricky. They often struggle with long-running tasks, limited visibility into what is happening, and components that are too tightly coupled. These issues make problems hard to diagnose and the application hard to scale.

Fortunately, AWS services cover each of these concerns. Step Functions orchestrates workflows, SQS decouples components so they can work independently, Lambda runs short tasks, and DynamoDB stores data durably. Together, they make it easier to handle errors, observe what is going on, and scale the application when needed.

Prerequisites

- AWS account

- Basic knowledge of the Python programming language

- Basic understanding of cloud and serverless application models

Step-by-Step Implementation

Step 1: API Gateway → Lambda Function (Receiving Payment Requests)

API Gateway invokes a Lambda function that handles incoming payment requests. To implement this, we define the API and the Lambda function, together with its IAM role, as resources in the AWS SAM template (template.yml). The function is triggered by POST requests to a path with a parameter, /integrate/pay/{method}, and it sends the transaction details to Amazon SQS for asynchronous processing.

```yaml
ApiGatewayApi:
  Type: AWS::Serverless::Api
  Properties:
    StageName: Prod  # required by AWS::Serverless::Api; the stage name is an assumption
    Cors:
      # Each value is a single quoted string; methods are comma-separated inside it
      AllowMethods: "'POST,GET,OPTIONS'"
      AllowHeaders: "'Content-Type,X-Amz-Date,Authorization,X-Api-Key,X-Amz-Security-Token'"
      AllowOrigin: "'*'"

InitialLambdaFunction:
  Type: AWS::Serverless::Function
  Properties:
    Handler: <function_name>
    CodeUri: <path_to_lambda_function>
    Runtime: python3.10
    MemorySize: 128
    Timeout: 30
    Environment:
      Variables:
        SQS_QUEUE_URL: !Ref SQSMessageQueue  # !Ref on a queue returns its URL
    Tracing: Active
    Events:
      ApiPOST:
        Type: Api
        Properties:
          Path: /integrate/pay/{method}
          Method: POST
          RestApiId:
            Ref: ApiGatewayApi
    Role: !Sub ${SQSMessageIntegrationRole.Arn}
```
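The {method} segment in the path is what makes the endpoint dynamic: API Gateway passes the matched value to the function in event["pathParameters"]. The sketch below is a simplified, hypothetical model of that matching. The match_path helper is illustrative only and is not part of API Gateway or boto3. It handles only single-segment parameters like {method}.

```python
from typing import Dict, Optional


def match_path(template: str, path: str) -> Optional[Dict[str, str]]:
    """Return the path parameters if `path` matches `template`, else None.

    Hypothetical, simplified model: each {name} segment matches exactly one
    path segment. Greedy parameters such as {proxy+} are not handled.
    """
    template_parts = template.strip("/").split("/")
    path_parts = path.strip("/").split("/")
    if len(template_parts) != len(path_parts):
        return None

    params: Dict[str, str] = {}
    for expected, actual in zip(template_parts, path_parts):
        if expected.startswith("{") and expected.endswith("}"):
            params[expected[1:-1]] = actual  # capture the parameter value
        elif expected != actual:
            return None  # a literal segment does not match
    return params


print(match_path("/integrate/pay/{method}", "/integrate/pay/card"))    # {'method': 'card'}
print(match_path("/integrate/pay/{method}", "/integrate/refund/card"))  # None
```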

Step 2: Lambda Function → SQS (Message Queue for Decoupling)

The SQS queue is defined as a resource in the same template:

```yaml
SQSMessageQueue:
  Type: AWS::SQS::Queue
```

By default, the Lambda function has no permission to access SQS, so we define an IAM role that grants it permission to send messages to the queue.

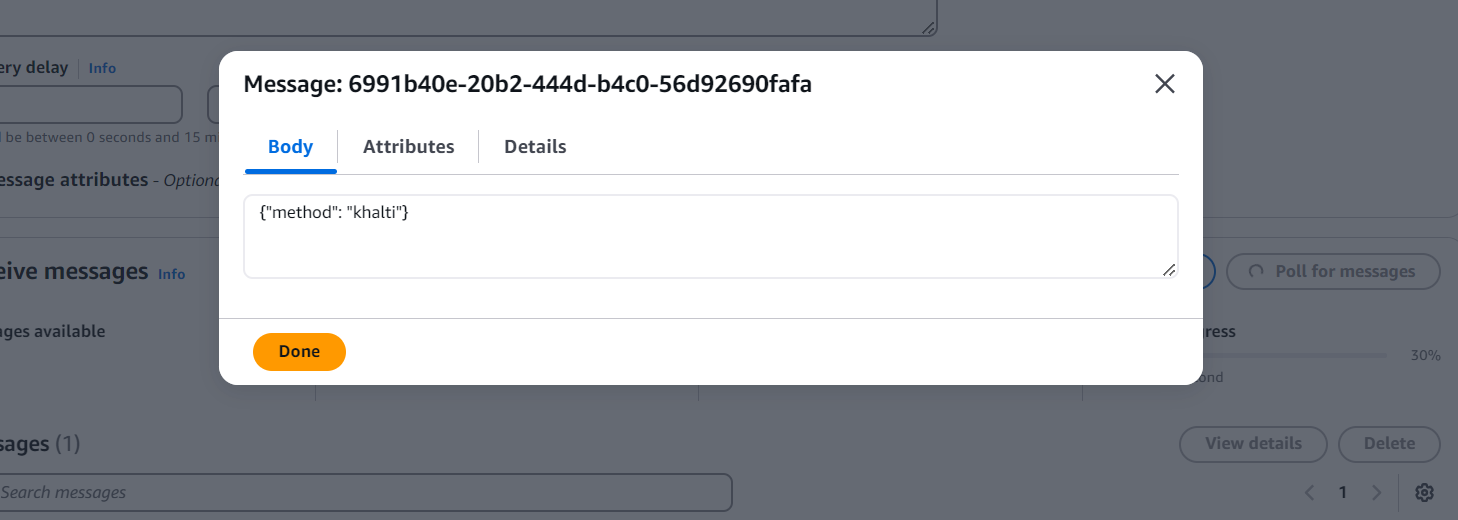

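SQS provides fault-tolerant, asynchronous message handling. The function only enqueues the request and returns, so API Gateway does not have to wait for the whole payment workflow and is less likely to time out.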

```yaml
SQSMessageIntegrationRole:
  Type: AWS::IAM::Role
  Properties:
    AssumeRolePolicyDocument:
      Version: "2012-10-17"
      Statement:
        - Effect: Allow
          Principal:
            Service: lambda.amazonaws.com
          Action:
            - sts:AssumeRole
    Policies:
      - PolicyName: logs
        PolicyDocument:
          Statement:
            - Effect: Allow
              Action:
                - logs:CreateLogGroup
                - logs:CreateLogStream
                - logs:PutLogEvents
              Resource: arn:aws:logs:*:*:*
      - PolicyName: sqs
        PolicyDocument:
          Statement:
            - Effect: Allow
              Action:
                - sqs:ReceiveMessage
                - sqs:SendMessage
              Resource: !Sub ${SQSMessageQueue.Arn}
```

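Besides sqs:SendMessage and sqs:ReceiveMessage, you can add the sqs:DeleteMessage action if the Lambda function also needs to delete messages from the queue. The handler below only sends messages, so sqs:SendMessage alone is enough if you want least privilege.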
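A consumer needs sqs:DeleteMessage because of the SQS visibility timeout. Receiving a message only hides it for a while. Unless the consumer deletes it after processing, it becomes visible again and is delivered a second time.

The InMemoryQueue class below is a hypothetical, heavily simplified model of that behavior for illustration:

- It is not the SQS API.
- It uses the message id as the receipt handle.
- It ignores features such as receive counts and dead-letter queues.

```python
import itertools
from typing import Dict, Optional, Tuple


class InMemoryQueue:
    """Hypothetical, simplified model of SQS receive/delete semantics.

    A received message is hidden for `visibility_timeout` seconds; if it is not
    deleted in that window, it becomes visible and is delivered again.
    Time is passed in explicitly (`now`) to keep the example deterministic.
    """

    def __init__(self, visibility_timeout: float) -> None:
        self.visibility_timeout = visibility_timeout
        # message id -> (body, time from which the message is visible)
        self._messages: Dict[str, Tuple[str, float]] = {}
        self._ids = itertools.count(1)

    def send_message(self, body: str) -> str:
        message_id = str(next(self._ids))
        self._messages[message_id] = (body, 0.0)  # visible immediately
        return message_id

    def receive_message(self, now: float) -> Optional[Tuple[str, str]]:
        """Return (receipt_handle, body) for one visible message, or None."""
        for message_id, (body, visible_from) in self._messages.items():
            if visible_from <= now:
                # Hide the message until the visibility timeout expires
                self._messages[message_id] = (body, now + self.visibility_timeout)
                return message_id, body  # the id doubles as the receipt handle here
        return None

    def delete_message(self, receipt_handle: str) -> None:
        self._messages.pop(receipt_handle, None)


queue = InMemoryQueue(visibility_timeout=30)
queue.send_message('{"method": "card"}')

first = queue.receive_message(now=0)         # a consumer receives the message
hidden = queue.receive_message(now=10)       # None: still inside the visibility timeout
redelivered = queue.receive_message(now=31)  # delivered again: it was never deleted
queue.delete_message(redelivered[0])         # processing succeeded, so delete it
gone = queue.receive_message(now=100)        # None: deleted messages are not redelivered
```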

With the queue and role configured, we can now write the handler in Python.

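```python
import json
import os

import boto3

SQS_QUEUE_URL = os.environ["SQS_QUEUE_URL"]

# Create the client once so warm invocations can reuse it
sqs_client = boto3.client("sqs")


def lambda_handler(event, _):
    # Value of {method} in /integrate/pay/{method}; pathParameters may be null
    method = (event.get("pathParameters") or {}).get("method")

    sqs_client.send_message(
        QueueUrl=SQS_QUEUE_URL, MessageBody=json.dumps({"method": method})
    )

    # The Lambda proxy integration expects a response object; 202 = accepted for async processing
    return {"statusCode": 202, "body": json.dumps({"message": "Payment request queued"})}
```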

Here, we use boto3, the AWS SDK for Python, to work with AWS resources.

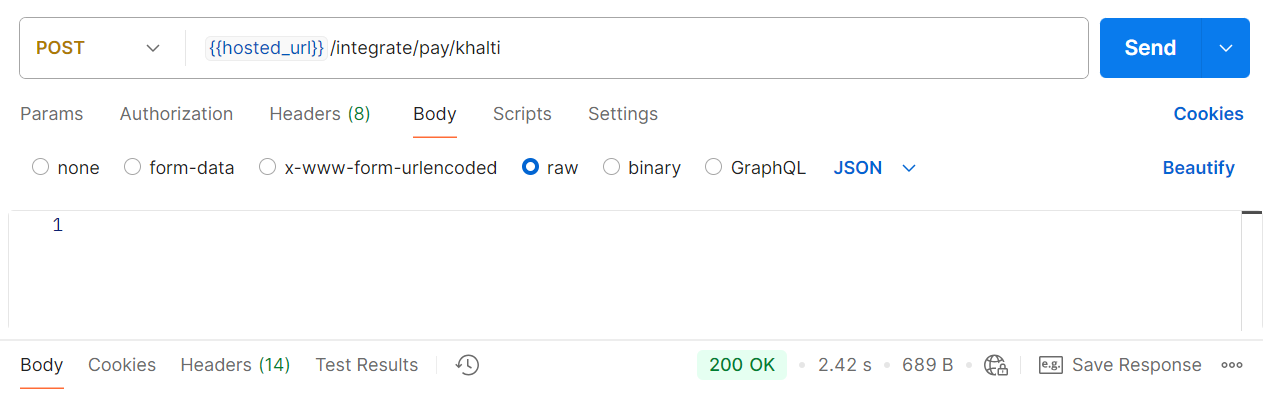

Here, we call the endpoint, which invokes the Lambda function and sends a message to the SQS queue.
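To try the Step 1 logic without deploying anything, the SQS client can be injected and replaced with a fake. In this sketch, FakeSqsClient, make_handler, and the queue URL are hypothetical. The handler mirrors the Step 1 logic and returns a proxy-style response, which API Gateway's Lambda proxy integration expects.

```python
import json


class FakeSqsClient:
    """Hypothetical stand-in for boto3's SQS client; it only records send_message calls."""

    def __init__(self):
        self.sent = []

    def send_message(self, QueueUrl, MessageBody):
        self.sent.append({"QueueUrl": QueueUrl, "MessageBody": MessageBody})
        return {"MessageId": "fake-message-id"}


def make_handler(sqs_client, queue_url):
    """Build a handler with the Step 1 logic, using an injected SQS client."""

    def lambda_handler(event, _):
        method = (event.get("pathParameters") or {}).get("method")
        sqs_client.send_message(QueueUrl=queue_url, MessageBody=json.dumps({"method": method}))
        # Proxy-style response: 202 Accepted, since processing continues asynchronously
        return {"statusCode": 202, "body": json.dumps({"message": "Payment request queued"})}

    return lambda_handler


# Hypothetical queue URL and a minimal proxy event containing only pathParameters
QUEUE_URL = "https://sqs.us-east-1.amazonaws.com/123456789012/example-queue"
fake_sqs = FakeSqsClient()
handler = make_handler(fake_sqs, QUEUE_URL)

response = handler({"pathParameters": {"method": "card"}}, None)
print(response["statusCode"])           # 202
print(fake_sqs.sent[0]["MessageBody"])  # {"method": "card"}
```

Injecting the client keeps the test free of AWS credentials. In the deployed function, the real boto3 client plays the same role.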

Step 3: SQS → Step Functions (Workflow Orchestration)

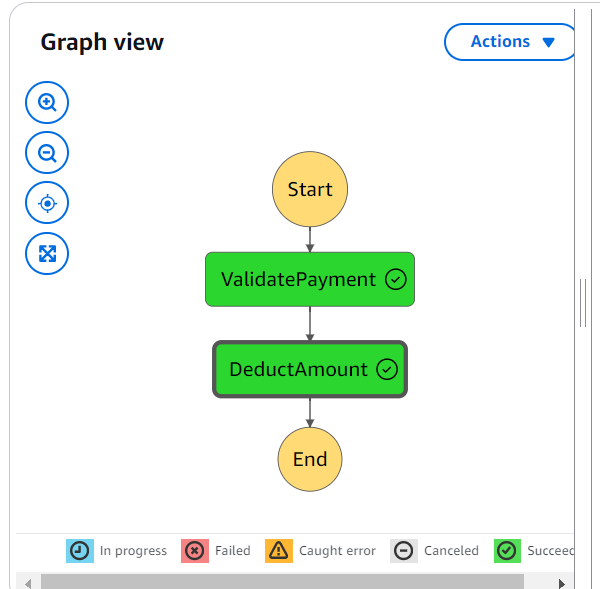

AWS Step Functions lets developers implement logic as separate functions and run them in a defined order. It also supports parallel execution. Because every step of an execution is recorded, workflows are easier to debug and change.

In this system, Step Functions manages the transaction state and automates the workflow execution.

```yaml
StateMachineProcess:
  Type: AWS::StepFunctions::StateMachine
  Properties:
    StateMachineName: StateMachineProcess
    DefinitionString: !Sub |
      <JSON_FORMAT_DEFINITION>
    TracingConfiguration:
      Enabled: true
    RoleArn: !GetAtt StateMachineRole.Arn
```

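Here, we use Amazon States Language (ASL) to define the state machine in JSON.

The placeholder in DefinitionString is replaced with the following definition. Because it sits inside !Sub, the ${ValidatePayment.Arn} and ${DeductAmount.Arn} references resolve to the functions' ARNs at deployment.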

```json
{
  "Comment": "Arrange multiple Lambda functions",
  "StartAt": "ValidatePayment",
  "States": {
    "ValidatePayment": {
      "Type": "Task",
      "Resource": "${ValidatePayment.Arn}",
      "Parameters": {
        "Payload.$": "$"
      },
      "Retry": [
        {
          "ErrorEquals": ["Lambda.TooManyRequestsException"],
          "IntervalSeconds": 2,
          "MaxAttempts": 5,
          "BackoffRate": 2.0
        }
      ],
      "Next": "DeductAmount"
    },
    "DeductAmount": {
      "Type": "Task",
      "Resource": "${DeductAmount.Arn}",
      "Parameters": {
        "Payload.$": "$"
      },
      "Retry": [
        {
          "ErrorEquals": ["Lambda.TooManyRequestsException"],
          "IntervalSeconds": 2,
          "MaxAttempts": 5,
          "BackoffRate": 2.0
        }
      ],
      "End": true
    }
  }
}
```
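Each Retry block uses three fields:

- IntervalSeconds is the wait before the first retry.
- BackoffRate multiplies that wait after every retry.
- MaxAttempts caps the number of retries.

The calculation below shows the resulting schedule when an invocation is throttled (Lambda.TooManyRequestsException). It assumes no jitter and no maximum-delay cap; this definition sets neither.

```python
def retry_delays(interval_seconds: float, max_attempts: int, backoff_rate: float) -> list:
    """Wait (in seconds) before each retry, assuming no jitter and no delay cap."""
    return [interval_seconds * backoff_rate ** attempt for attempt in range(max_attempts)]


delays = retry_delays(interval_seconds=2, max_attempts=5, backoff_rate=2.0)
print(delays)       # [2.0, 4.0, 8.0, 16.0, 32.0]
print(sum(delays))  # 62.0
```

A throttled step is therefore retried after 2, 4, 8, 16, and 32 seconds, about a minute in total. If every retry fails, the error is raised, and because no Catch is defined, the execution fails.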

The state machine also needs permission to invoke the Lambda functions, so we define an IAM role for it that Step Functions can assume.

```yaml
StateMachineRole:
  Type: AWS::IAM::Role
  Properties:
    AssumeRolePolicyDocument:
      Version: "2012-10-17"
      Statement:
        - Effect: Allow
          Principal:
            Service: states.amazonaws.com
          Action: sts:AssumeRole
    Policies:
      - PolicyName: CloudWatchLogs
        PolicyDocument:
          Version: "2012-10-17"
          Statement:
            - Effect: Allow
              Action:
                - "logs:CreateLogDelivery"
                - "logs:GetLogDelivery"
                - "logs:UpdateLogDelivery"
                - "logs:DeleteLogDelivery"
                - "logs:ListLogDeliveries"
                - "logs:PutResourcePolicy"
                - "logs:DescribeResourcePolicies"
                - "logs:DescribeLogGroups"
              Resource: "*"
      - PolicyName: StepFunctionInvokePolicy
        PolicyDocument:
          Version: "2012-10-17"
          Statement:
            - Effect: Allow
              Action:
                - lambda:InvokeFunction
              Resource:
                - !GetAtt ValidatePayment.Arn
                - !GetAtt DeductAmount.Arn
```

With this role, the state machine can invoke both Lambda functions in the workflow. Next, we define those Lambda functions.

```yaml
ValidatePayment:
  Type: AWS::Serverless::Function
  Properties:
    Handler: <function_name>
    CodeUri: <path_to_lambda_function>
    Runtime: python3.10
    MemorySize: 128
    Timeout: 30
    Tracing: Active
    Description: This is lambda function one

DeductAmount:
  Type: AWS::Serverless::Function
  Properties:
    Handler: <function_name>
    CodeUri: <path_to_lambda_function>
    Runtime: python3.10
    MemorySize: 128
    Timeout: 30
    Tracing: Active
    Description: This is lambda function two
```

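The remaining question is how to start the state machine from SQS. One option is EventBridge Pipes, which connects a source (the SQS queue) to a target (the state machine). With BatchSize: 1, each execution receives a single message. FIRE_AND_FORGET starts the execution asynchronously, without waiting for it to finish.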

```yaml
SqsToStateMachine:
  Type: AWS::Pipes::Pipe
  Properties:
    Name: SqsToStateMachinePipe
    RoleArn: !GetAtt EventBridgePipesRole.Arn
    Source: !GetAtt SQSMessageQueue.Arn
    SourceParameters:
      SqsQueueParameters:
        BatchSize: 1
    Target: !Ref StateMachineProcess
    TargetParameters:
      StepFunctionStateMachineParameters:
        InvocationType: FIRE_AND_FORGET
```

The pipe also needs a role that allows it to read and delete messages from SQS and to start the state machine.

```yaml
EventBridgePipesRole:
  Type: AWS::IAM::Role
  Properties:
    AssumeRolePolicyDocument:
      Version: "2012-10-17"
      Statement:
        - Effect: Allow
          Principal:
            Service:
              - pipes.amazonaws.com
          Action:
            - sts:AssumeRole
    Policies:
      - PolicyName: CloudWatchLogs
        PolicyDocument:
          Version: "2012-10-17"
          Statement:
            - Effect: Allow
              Action:
                - "logs:CreateLogGroup"
                - "logs:CreateLogStream"
                - "logs:PutLogEvents"
              Resource: "*"
      - PolicyName: ReadSQS
        PolicyDocument:
          Version: "2012-10-17"
          Statement:
            - Effect: Allow
              Action:
                - "sqs:ReceiveMessage"
                - "sqs:DeleteMessage"
                - "sqs:GetQueueAttributes"
              Resource: !GetAtt SQSMessageQueue.Arn
      - PolicyName: ExecuteSFN
        PolicyDocument:
          Version: "2012-10-17"
          Statement:
            - Effect: Allow
              Action:
                - "states:StartExecution"
              Resource: !Ref StateMachineProcess
```

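With the pipe in place, each SQS message becomes the input to the state machine's first Lambda function, which reads it from the event parameter. The pipe delivers a batch as a JSON array of SQS records, and the state's Parameters wrap that input under the Payload key. The message body is therefore available as event["Payload"][0]["body"].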

```python
import json


# ValidatePayment
def lambda_handler(event, _):
    # The pipe sends a list of SQS records; BatchSize is 1, so take the first one
    body = json.loads(event["Payload"][0]["body"])  # Parse the body JSON string
    method = body["method"]  # Access the method key
    return {"statusCode": 200, "body": json.dumps({"method": method})}
```

The output of ValidatePayment becomes the input of DeductAmount, again wrapped under the Payload key by that state's Parameters.

```python
import json


# DeductAmount
def lambda_handler(event, _):
    body = json.loads(event["Payload"]["body"])
    print(body)
```

json.loads parses the payload body. Because DeductAmount is marked with "End": true in the state machine definition, the execution ends after this step.
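The data flow between the pipe and the two Task states is easy to get wrong, so here is a local simulation. It makes these assumptions:

- The SQS record is trimmed to two fields. Real records carry more, such as receiptHandle and attributes.
- run_task is a hypothetical helper. It models the "Parameters": {"Payload.$": "$"} wrapping plus the plain-ARN Lambda integration, in which the function's return value becomes the state output.
- The handlers are copies of the ones above, except that deduct_amount returns the parsed body instead of printing it.

```python
import json


# Local copies of the two handlers; deduct_amount returns the body instead of printing it
def validate_payment(event, _):
    body = json.loads(event["Payload"][0]["body"])  # first (and only) SQS record
    return {"statusCode": 200, "body": json.dumps({"method": body["method"]})}


def deduct_amount(event, _):
    return json.loads(event["Payload"]["body"])


def run_task(handler, state_input):
    """Hypothetical model of one Task state in this definition.

    "Parameters": {"Payload.$": "$"} hands the function {"Payload": <state input>};
    with a plain Lambda ARN as Resource, the return value becomes the state output.
    """
    return handler({"Payload": state_input}, None)


# Pipes delivers the batch as a JSON array of SQS records (trimmed to two fields here)
pipe_input = [{"messageId": "example-message-id", "body": json.dumps({"method": "card"})}]

validated = run_task(validate_payment, pipe_input)  # ValidatePayment state
result = run_task(deduct_amount, validated)         # DeductAmount state ("End": true)
print(validated)  # {'statusCode': 200, 'body': '{"method": "card"}'}
print(result)     # {'method': 'card'}
```

The simulation explains why the first handler indexes [0] and the second does not: only the pipe's input is an array.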

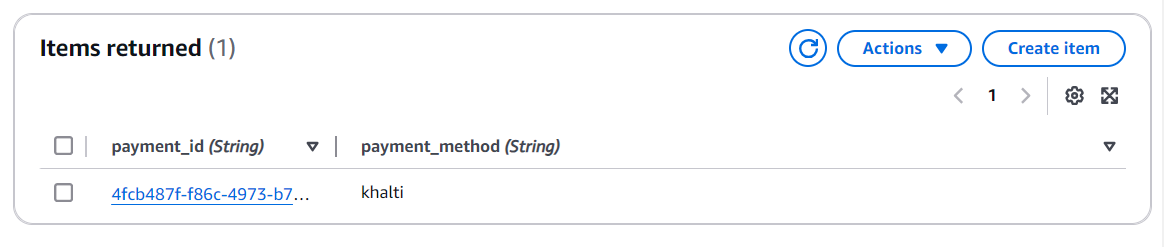

Step 4: Step Functions → DynamoDB (Storing Transaction Data)

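Amazon DynamoDB stores the transaction details. We extend the DeductAmount function definition from Step 3 with a DynamoDB CRUD policy and the table name as an environment variable, then define the table itself.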

```yaml
DeductAmount:
  Type: AWS::Serverless::Function
  Properties:
    Handler: <function_name>
    CodeUri: <path_to_lambda_function>
    Runtime: python3.10
    MemorySize: 128
    Timeout: 30
    Tracing: Active
    Description: This is lambda function two
    Policies:
      - DynamoDBCrudPolicy:
          TableName: !Ref MethodTable
    Environment:
      Variables:
        METHOD_TABLE: !Ref MethodTable
```

```yaml
MethodTable:
  Type: AWS::DynamoDB::Table
  Properties:
    TableName: MethodTable
    AttributeDefinitions:
      - AttributeName: payment_id
        AttributeType: S
      - AttributeName: payment_method
        AttributeType: S
    KeySchema:
      - AttributeName: payment_id
        KeyType: HASH
      - AttributeName: payment_method
        KeyType: RANGE
    BillingMode: PAY_PER_REQUEST
```

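The payment_id attribute, declared with KeyType HASH, is the partition key, which every table requires. The payment_method attribute, declared with KeyType RANGE, is the optional sort key. Together they form each item's primary key.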
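To see what HASH and RANGE mean in practice, the sketch below models the table as a Python dict keyed by the (payment_id, payment_method) pair. It is a hypothetical illustration of key semantics only, not how DynamoDB stores data. The status attribute and the p-1 id are made up for the example.

```python
from typing import Dict, List, Tuple

# Hypothetical in-memory model of MethodTable's key schema:
# payment_id is the partition (HASH) key, payment_method the sort (RANGE) key.
table: Dict[Tuple[str, str], dict] = {}


def put_item(item: dict) -> None:
    # The primary key is the (partition key, sort key) pair; like PutItem,
    # writing an item whose full key already exists replaces that item.
    table[(item["payment_id"], item["payment_method"])] = item


def query(payment_id: str) -> List[dict]:
    # Like a Query on the partition key: matching items in ascending sort-key order
    return [table[key] for key in sorted(table) if key[0] == payment_id]


put_item({"payment_id": "p-1", "payment_method": "card"})
put_item({"payment_id": "p-1", "payment_method": "wallet"})  # same partition key, new sort key
put_item({"payment_id": "p-1", "payment_method": "card", "status": "settled"})  # same full key

print(len(table))                                   # 2
print([i["payment_method"] for i in query("p-1")])  # ['card', 'wallet']
```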

```python
import json
import os
import uuid

import boto3

METHOD_TABLE = os.environ["METHOD_TABLE"]

# Create the table resource once so warm invocations can reuse it
dynamo_table = boto3.resource("dynamodb").Table(METHOD_TABLE)


def lambda_handler(event, _):
    body = json.loads(event["Payload"]["body"])  # Parse the body JSON string
    method = body["method"]  # Access the method key
    payment_id = str(uuid.uuid4())  # Unique partition key for this payment
    dynamo_table.put_item(Item={"payment_id": payment_id, "payment_method": method})
    return {"statusCode": 200, "body": json.dumps({"message": "Stored!!"})}
```

Here, boto3 is used to access the DynamoDB table and store the item. Because each item gets a unique payment_id generated with uuid, we can later read or update a specific item by its full primary key (payment_id together with payment_method).
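Updating an item requires the full primary key, because the table has both a partition key and a sort key. The helper below builds keyword arguments for boto3's Table.update_item. The build_status_update name and the status attribute are hypothetical. status is aliased through ExpressionAttributeNames because it is a DynamoDB reserved word.

```python
def build_status_update(payment_id: str, payment_method: str, status: str) -> dict:
    """Keyword arguments for boto3's Table.update_item (hypothetical helper).

    MethodTable has a HASH and a RANGE key, so Key must contain both attributes.
    """
    return {
        "Key": {"payment_id": payment_id, "payment_method": payment_method},
        # "status" is a DynamoDB reserved word, so it is referenced through #s
        "UpdateExpression": "SET #s = :s",
        "ExpressionAttributeNames": {"#s": "status"},
        "ExpressionAttributeValues": {":s": status},
        "ReturnValues": "UPDATED_NEW",
    }


kwargs = build_status_update("p-1", "card", "COMPLETED")
print(kwargs["Key"])  # {'payment_id': 'p-1', 'payment_method': 'card'}
```

Inside the DeductAmount handler, this could be used as dynamo_table.update_item(**build_status_update(payment_id, method, "COMPLETED")), where "COMPLETED" is an illustrative value.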

Final Architecture Overview:

- API Gateway triggers Lambda, which sends requests to SQS.

- An EventBridge pipe reads messages from SQS and starts a Step Functions workflow execution.

- Step Functions coordinates multiple Lambda functions for validation and processing.

- DynamoDB stores transaction details for record-keeping.

Conclusion

By integrating AWS Lambda, SQS, Step Functions, and DynamoDB, this serverless payment processing system is built for scalability, reliability, and efficiency. We can also configure a dead-letter queue (DLQ) for the SQS queue to capture messages that cannot be processed successfully.
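As a sketch of the DLQ setup, the helper below builds the RedrivePolicy attribute that boto3's SQS client accepts in set_queue_attributes. The dead-letter queue ARN and the receive count are assumptions. After a message has been received maxReceiveCount times without being deleted, SQS moves it to the DLQ. In a SAM or CloudFormation template, the same settings go in the queue's RedrivePolicy property.

```python
import json


def build_redrive_attributes(dead_letter_queue_arn: str, max_receive_count: int) -> dict:
    """Attributes that attach a dead-letter queue to a source SQS queue.

    SQS expects RedrivePolicy as a JSON string inside the Attributes map.
    """
    redrive_policy = {
        "deadLetterTargetArn": dead_letter_queue_arn,
        "maxReceiveCount": str(max_receive_count),
    }
    return {"RedrivePolicy": json.dumps(redrive_policy)}


# Hypothetical DLQ ARN; with boto3 the result would be passed as
# boto3.client("sqs").set_queue_attributes(QueueUrl=queue_url, Attributes=attributes)
attributes = build_redrive_attributes("arn:aws:sqs:us-east-1:123456789012:payments-dlq", 5)
print(attributes)
```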


Stay tuned for more. Let's connect on LinkedIn, and explore my GitHub for future insights.